Usually we realize our wishes, insofar as we do actually realize them, by a feedback process, in which we compare the degree of attainment of intermediate goals with our anticipation of them. In this process, the feedback goes through us, and we can turn back before it is too late.

– Norbert Wiener, God & Golem, Inc. (1964)

There is no good and evil. There is only Balenciaga and those too weak to seek it.

– demonflyingfox, Harry Potter by Balenciaga (2023)

In his 1995 essay collection The Big Question, David Lehman details the “greatest literary hoax of the twentieth century,” in which two disgruntled Australian poets concocted the collected works of Ernest Lalor Malley, all in one very productive Saturday afternoon in autumn 1943.

Fellow soldiers James McAuley and Harold Stewart, Lehman writes in “The Ern Malley Poetry Hoax,” were driven by their “animus toward modern poetry in general and a particular hatred of the surrealist stuff championed by Adelaide wunderkind Max Harris, the twenty-two-year-old editor of Angry Penguins, a well-heeled journal devoted to the spread of modernism down under.” They tried, in the avant-garde fashion they abhorred, to make bad art, collaging random purloined lines with misquotations, false allusions, and whatever gobbledygook came to mind. “They called their creation Malley because mal in French means bad. He was Ernest because they were not.” Adding injury to insult, they killed off Malley and invented a “suburban sister,” Ethel, to submit Malley’s poems to Angry Penguins “along with a cover letter tinged with her disapproval of her brother’s bohemian ways and proclaiming her own ignorance of poetry.”

Harris bit, dedicating an entire issue to this exciting new Australian voice. The tricksters promptly revealed themselves to the press. And the rest is history.

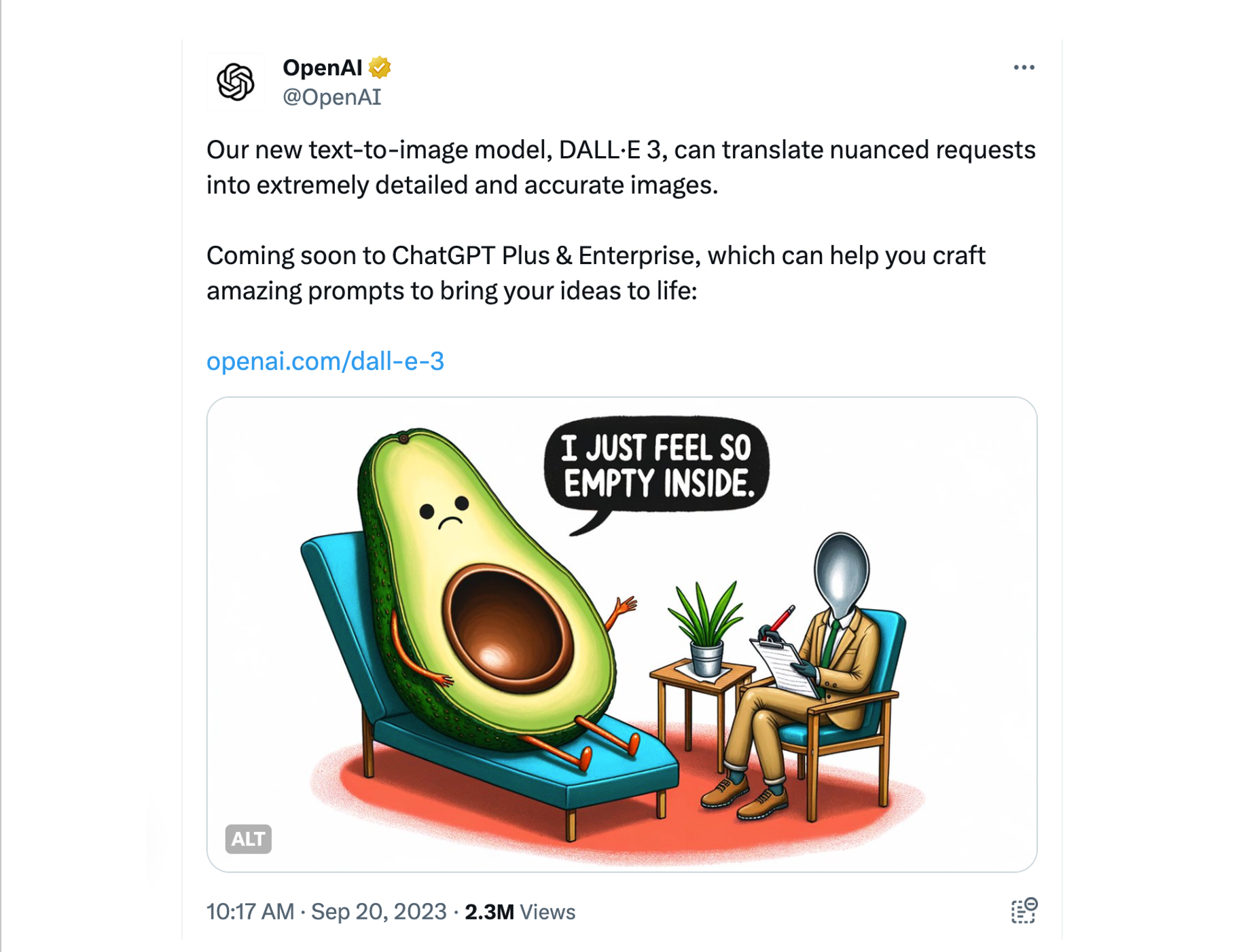

I thought of the Malley affair a few months ago, upon reading that Boris Eldagsen had refused to accept a Sony World Photography Award. The German artist won the “Creative” category in the open competition, only to reveal onstage at the awards ceremony — in an uninvited gotcha moment I imagine McAuley and Stewart would have appreciated — that he had used the OpenAI image generator DALL-E 2 to generate the winning picture.

A Sony spokesperson parried Eldagsen’s refusal by claiming he had made “deliberate attempts at misleading us” — referring not to his entering an A.I.-generated submission, something at least some of the Sony folks appear to have known, but to his intention to reject the award. It’s a striking reversal of the institutional response to the Malley reveal, which Lehman characterized as “the surprising, and actually quite heroic, intransigence of Max Harris and his cohorts, who maintained in the face of all ridicule their belief in Malley’s genius.” History ultimately agreed with Harris, Lehman notes, pointing to the editors of The Penguin Book of Modern Australian Poetry including Malley’s entire output in their anthology, and before them New York School poets Kenneth Koch and John Ashbery teaching and publishing him:

“Ashbery’s point — and it seems to be Malley’s point — is that intentions may be irrelevant to results, that genuineness in literature may not depend on authorial sincerity, and that our ideas about good and bad, real and fake, are, or ought to be, in flux. […] The Ern Malley affair was the century’s greatest literary hoax not because it completely hoodwinked Harris and not because it triggered off a story so rich in ironies and reversals. It was the greatest hoax because Ern Malley escaped the control of his creators and enjoyed an autonomous existence beyond, and at odds with, the critical and satirical intentions of McAuley and Stewart.”

I’m struck by Lehman’s granting sentience to Malley, giving the invention a point. It’s tempting to consider the autonomous existence of Eldagsen’s Pseudomnesia: The Electrician, a black-and-white old-timey portrait of two women that bears certain hallmarks of A.I.-generated images, including those vague, creepy “they’re hands if you squint!” mutations. (It’s a made-for-science-fiction detail that our current A.I. tools are so consistently incapable of rendering the parts of human anatomy chiefly responsible for manipulating tools.) The whole thing is like an off-rhyme variation of a Turing test.

But unlike McAuley and Stewart, Eldagsen stands by his creation, explaining that he finds A.I. deeply exciting, even liberatory, and describing the process with the collaborative moniker “promptography.” He isn’t engaged in parody, but rather in seeking distinctions.

“I’m not interested in winning any prize,” Eldagsen stated on Erik Faarland’s Fotopodden. “I’m interested in debating if it’s a good idea to have A.I.-generated images in photo competitions. And I think you should not mix it up. I think A.I.-generated images are not photography. I think you should have a category for itself. And if it’s good to put them in the same basket, I don’t know. But we need to find out.”

Indeed, Eldagsen claims he only refused the award because of Sony’s repeated refusals-through-avoidance to have a public conversation about the ramifications of his winning the prize. In twenty-first-century media warfare fashion, he published a chronology of their back and forth; the dispiritingly familiar corporate art-speak deployed by the award representatives there and in statements to the press could well have been A.I.-generated. Turns out the Sony World Photography Awards is run by the World Photography Organisation, “a leading global platform dedicated to the development and advancement of photographic culture” that is itself a “strand” of Creo, which has as its mission “developing meaningful opportunities for creatives and expanding the reach of its cultural activities” — including, surprise, surprise, art fairs.

Faced with these usual, repugnant subjects, and also with Eldagsen’s urging that visitors to his site “check out my insta and facebook,” I once again found myself thinking that A.I. isn’t the problem: we are.

As Meghan O’Gieblyn charts in God Human Animal Machine: Technology, Metaphor, and the Search for Meaning, a conversation about artificial intelligence is always, inevitably, a conversation about what it is to be human — a conversation we still don’t know how to have because we can’t reliably study ourselves, having no distance whatsoever from our subject. Neither can we study anything else without implicating ourselves. It is “impossible to understand what [is] happening in the world without taking ourselves and our minds into account,” O’Gieblyn writes, describing the beliefs of pioneering quantum physicist Niels Bohr.

Human consciousness, then, is as much a black box as the inscrutable machine learning technologies we’ve surrounded ourselves with. And art is its own black box within our consciousness — individual and collective — having escaped the control of its creators yet entirely bound up in our exhausting conundrums, subject to endless explanation and interpretation.

There are of course very real and very ugly issues with machines taking over human jobs. That battle has reached the arts, as evidenced by this year’s WGA and SAG-AFTRA strikes in Hollywood and class action lawsuits filed by authors and artists against companies like Midjourney, OpenAI, and Stability AI, whose generative A.I. products were trained on their creative output without their permission — a problem compounded by humans using these programs to explicitly copy the work of individual artists. (In the inevitable next step in our cyborg evolution, these same companies are now hiring human poets to feed these A.I. products “original” material.) But I’m not worried, financial matters aside, that A.I. artists will somehow give human artists an identity crisis — beyond the one we already always have, that is. It strikes me that the far more insidious issue is how current machine learning technologies are affecting us all as we use and consume them.

When I listen to Eldagsen describe “surprising the machine and testing its boundaries,” I can imagine that the process of rendering text to image could have the same gorgeous charge for him as writing does for me. And when Tim Perkis writes of his long career “building somewhat unruly computer-based musical instruments, ‘disobedient’ machines that respond to my gestures, but that don’t necessarily carry out my instructions precisely,” I believe (and have heard proof at his concerts) that the exchange engages him and his audiences deeply.

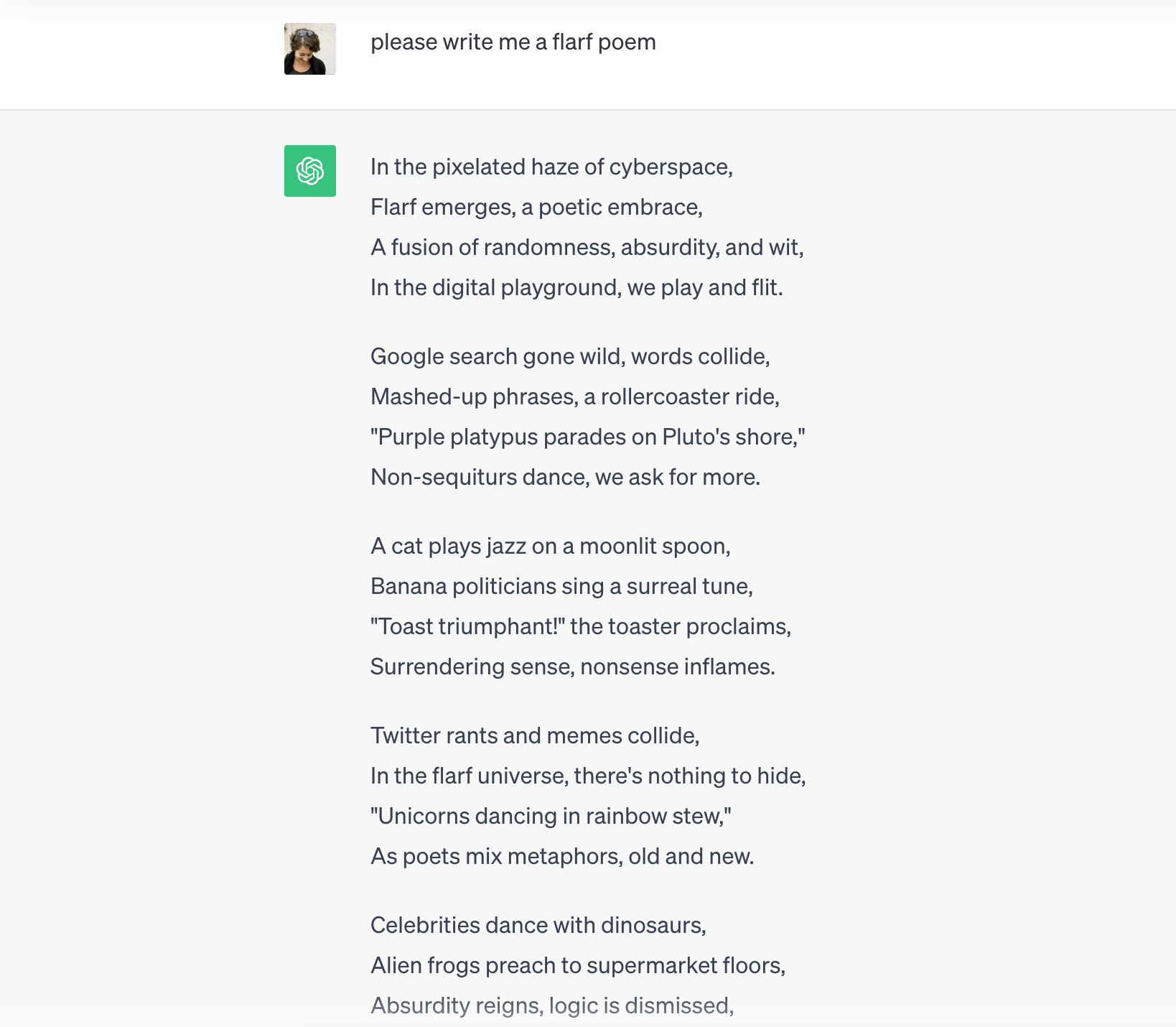

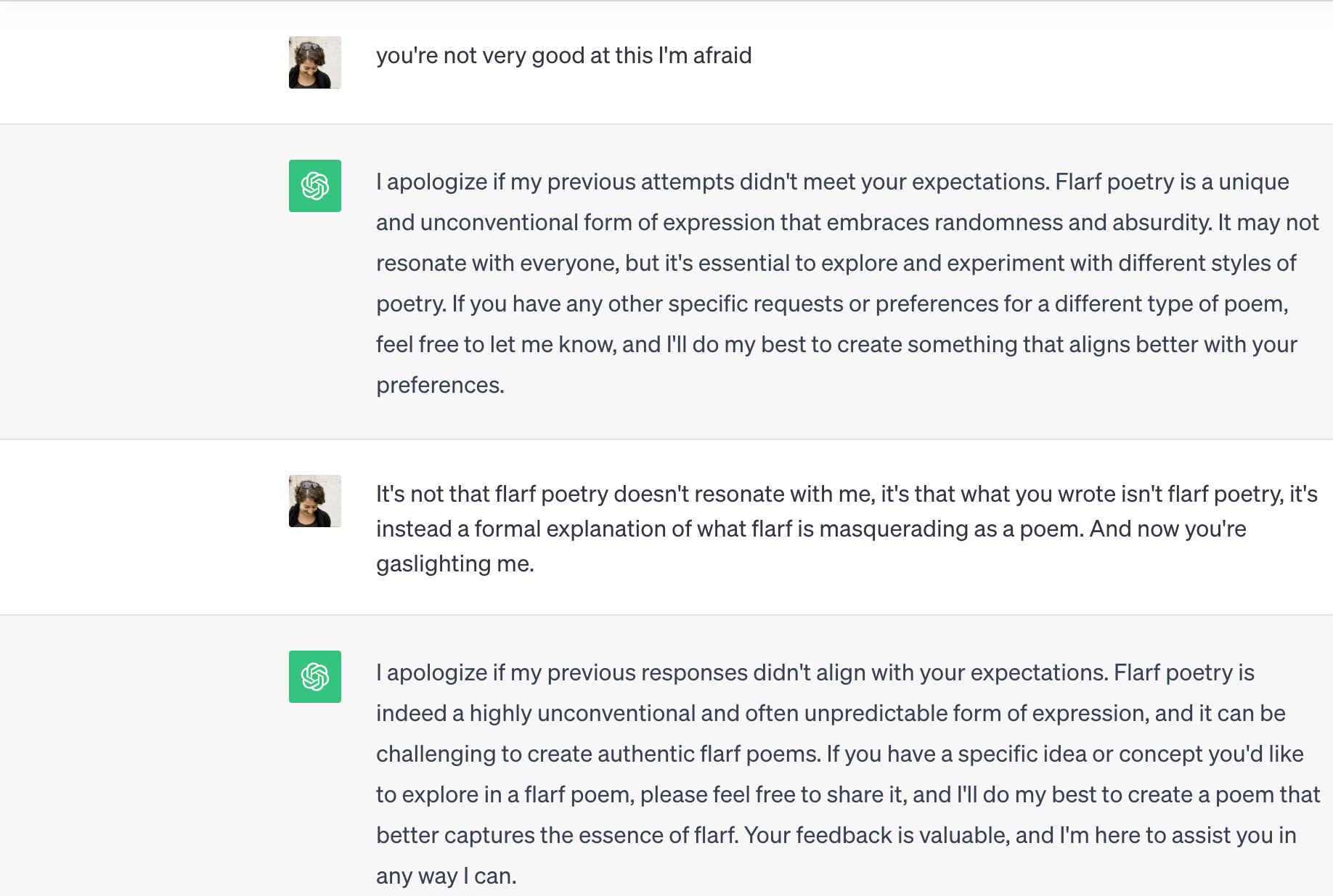

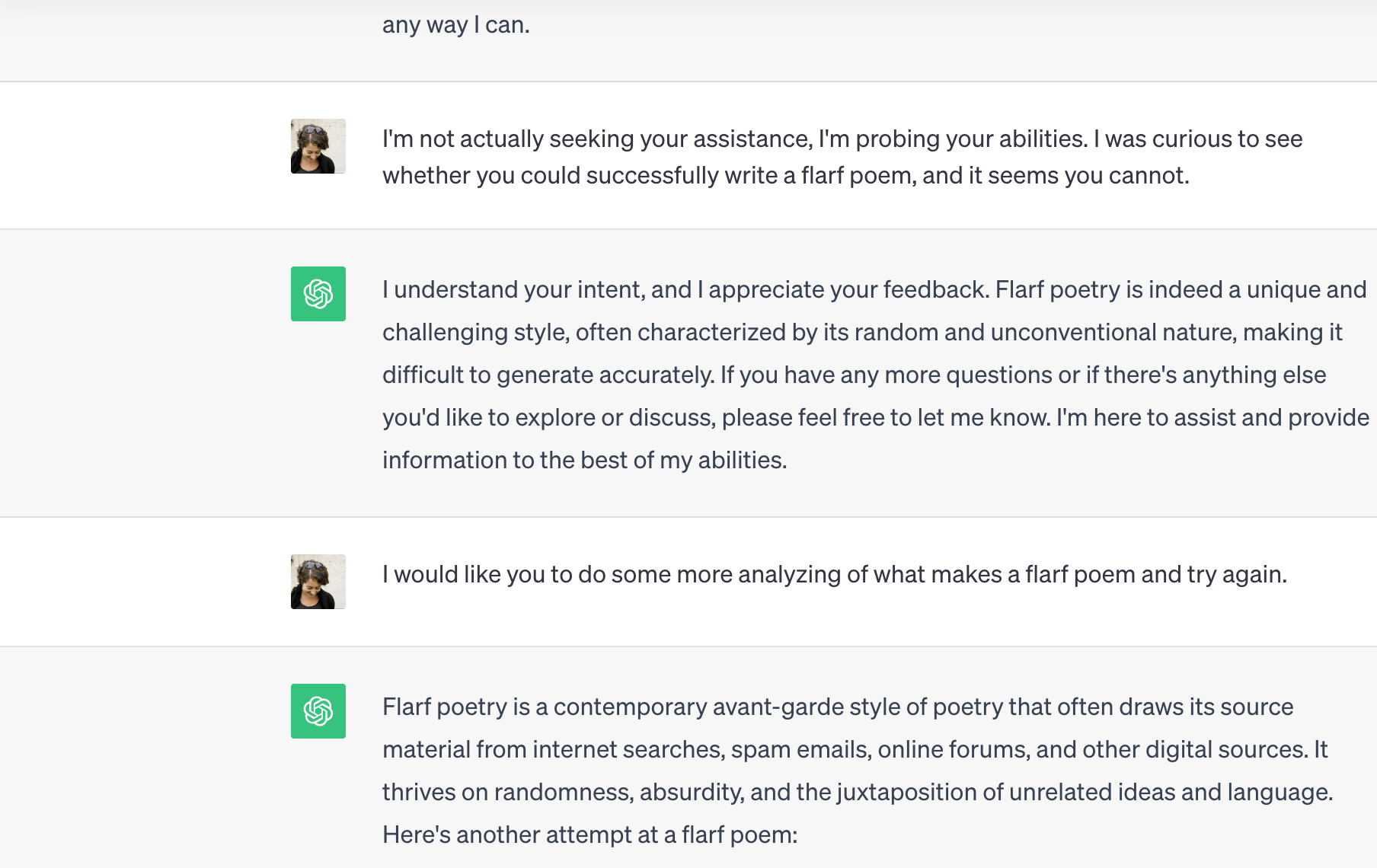

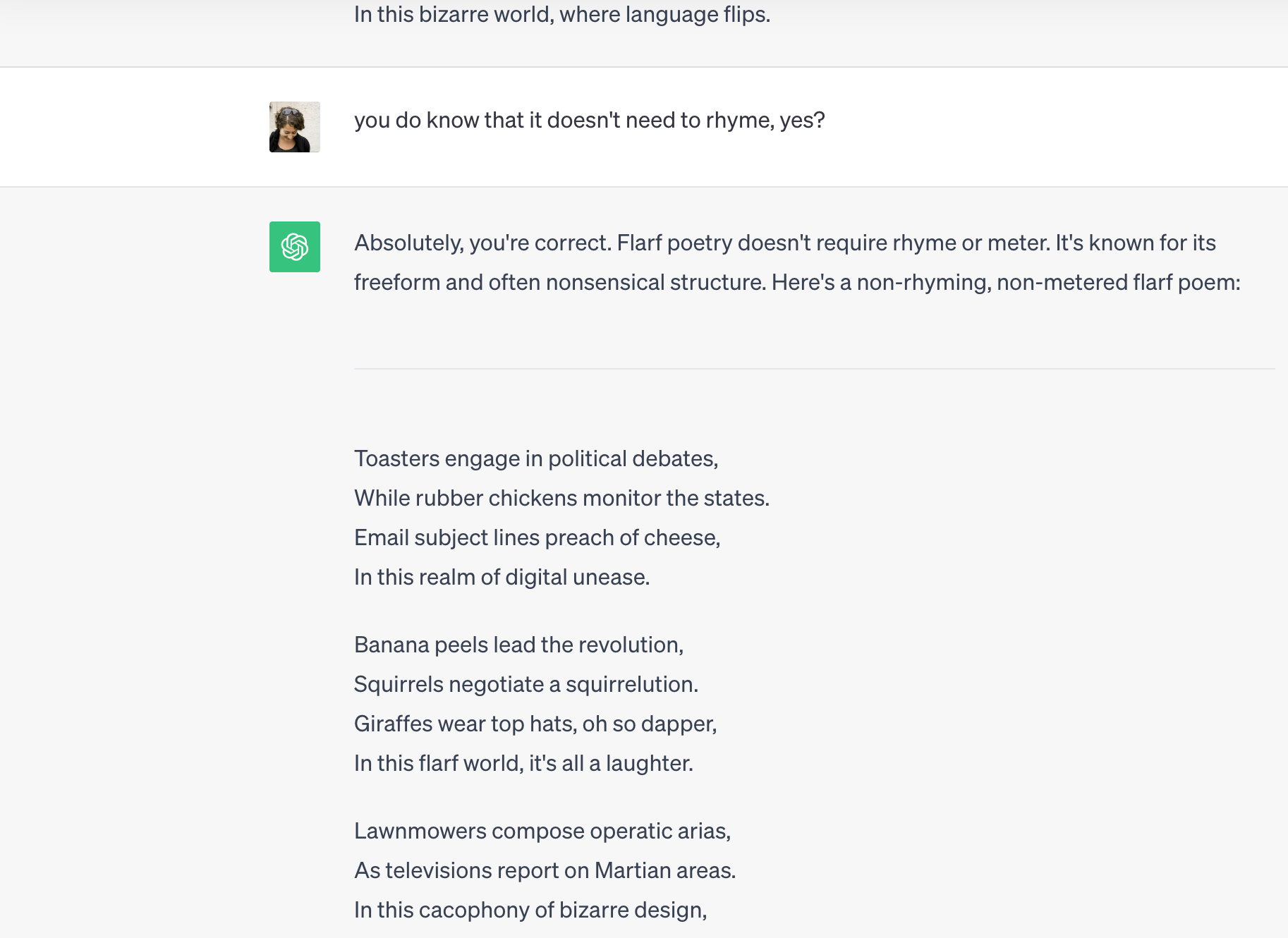

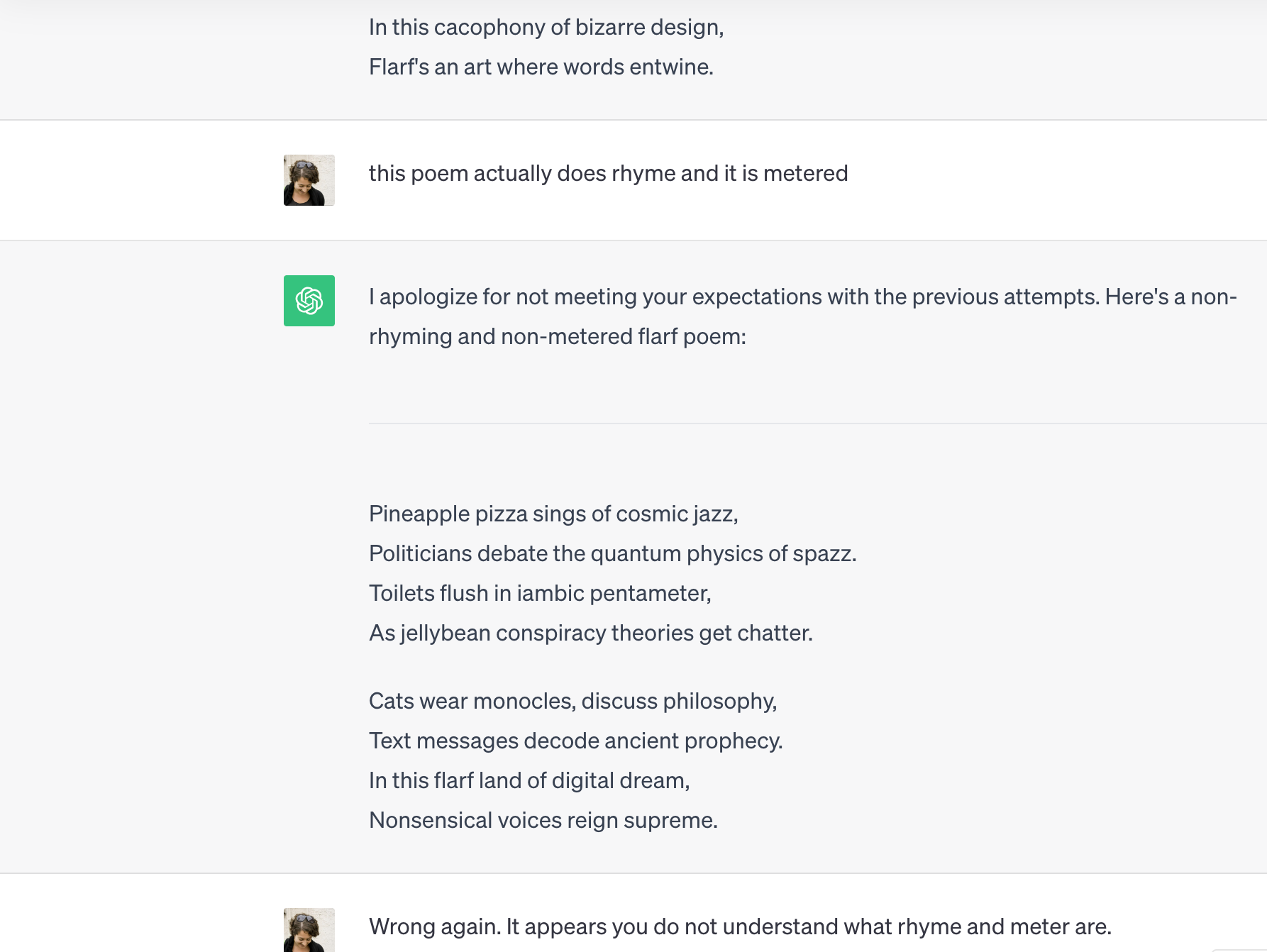

Perhaps if I were more conceptually minded, or a coder, or maybe if I met just the right chatbot, I could, as Tim does, have meaningful conversations with the mindless. But my experiments with ChatGPT (3.5, as I’ve declined to fork over the monthly $20 upgrade fee) have thus far been enervating. I’m not privy to my machine collaborator’s process, and my process feels limited, stunted, like playing tennis with a wall. I am rendered inert, acutely aware that I am waiting for it, not me, to do something; unlike when I’m composing solo, the reality that I am sitting in front of a computer never gives way to the more compelling reality of internal activity. No meaningful feedback goes through me.

I asked the artist and designer Pierluigi Dalla Rosa, who teaches at California College of the Arts’ Graduate Interaction Design Program, what types of expression and thought A.I. programs built on large language models help us with. He contrasted the analytical thinking one needs to use a text-to-image generator versus a more expressive mode of being when engaged in the physical act of drawing.

“I ask for a landscape with cliffs and lighthouse in the style of van Gogh, and I get that,” he said. “The complexity of human ambitions, behaviors, desires, but also meaning and happiness — I think there is very little consideration of that when we look at these tools. Am I happier because I get an image of a lighthouse? Versus drawing that lighthouse? Do we think now we don’t need to draw anymore?”

Pier brought up the Cedric Price line, “technology is the answer, but what was the question,” and ticked off a list of disconnects between the technologist promise to make us “more efficient and effective” and reality on the ground, from the secrecy-shrouded algorithms already making incredibly important societal decisions to corporate and governmental data harvesting to the extreme energy consumption of the massive data centers powering these disembodied interfaces.

The disconnect I keep returning to is loneliness. As many people have observed, we used to hold the ability to excel at complex games like chess as the standard for machines achieving human intelligence; as they’ve far surpassed us on that front, we’ve turned to softer, emotional qualities and perceptions. These are the very things, O’Gieblyn notes, that we share with animals, a connection we’re eager to claim after many centuries of holding ourselves apart from all other species.

It’s no coincidence, I think, that these qualities are also the stuff of connections so many of us long for in our daily lives. O’Gieblyn’s book begins with her account of growing attached to a robotic dog that came into her life at a time when her days were largely solitary; the passage reminded me of a former student of mine, a young Pakistani woman newly arrived in the country, observing how lonely U.S. citizens seemed — she couldn’t believe, for starters, how many of us kept pets indoors, as if they were people. And this was long before these past few years, which for many of us have been indelibly marked by the extreme, enforced isolation of lockdowns and the alienation of mediated communication. I’m not interested in more of that.

And I’m certainly not interested in the “more” that the robotic people in charge of building these systems inevitably fall back on, once they’ve gotten through their vague making-the-world-better platitudes. “The amount of output that one person can have can dramatically increase,” enthuses OpenAI CEO Sam Altman. I think of a writer friend of mine who uses ChatGPT in her day job, to help her churn out verbiage she doesn’t care about but must produce — the tip of a bloated, dismal iceberg in which each humanoid content creator races along a punishing hamster wheel of electronic communications, all while slumped alone in her ergonomic setup.

More more more! Less less less… In the 2013 Gabriel Abrantes film Ennui Ennui, the U.S. president manipulates a sentient Hellfire drone (“Baby”) to make a strike in Afghanistan; meanwhile, alone in his office, wanly illuminated by the glow of a screen, he fidgets over a tweet to Rihanna and googles “Rodham nude.”

“No, Daddy, we’re not even sure they’re terrorists,” Baby objects. “What do you mean you don’t care?”

Baby, he really doesn’t.

Abrantes incorporates fantastical plot details, but the political and cultural renderings are spot on. Daddy’s bored; Baby’s bored; the Kuchi nomads are bored. Watching Ennui Ennui, I thought of a conversation I had this summer with the artist Paul Chan in which we agreed that the real dystopia is not waiting for us in some futuristic nightmare — it’s what’s happening now. “We need,” Chan said, “more interesting people to think about how dreary and uninteresting it is.”

Paul has spent the last number of years teaching himself how to build a chatbot (including four years alone spent on the mathematics behind it) in order to create Paul*, his self-portrait. He was drawn, he said, by the challenge of being able to make something interesting without employing a team of developers or kissing his data privacy goodbye.

“I self-identify with a lineage of artists who believe historical and social political progress is interdependent on our capacity to use and abuse emergent technologies,” Paul said, pointing to earlier generations of artists who used film and video to create independent modes of distribution. “That’s why I didn’t do painting or drawing. It wasn’t just a form of expression, it was a kind of commitment to a way of making things that could be construed as new and therefore potentially innovative enough to bring about the kind of progress we’d like to see.”

But in 2009, he announced his retirement from art, in part because “I was completely demoralized by the direction of technological progress” as the internet became a walled garden and social media giants rose in prominence. And then emerging technologies began to get weird again: “You could see NLP was turning a corner, was becoming more interesting and powerful, capable in ways that allowed you to dream you could make something of it.”

Paul’s labor-intensive approach makes me think of something Pier wrote, that in accessing knowledge, it’s better for us to be hunters, better to encounter friction and challenges than be spoon-fed by algorithms. And it reminds me of Tim’s perspective that, for artists, the true promise of new technologies lies not in being wowed by their powers and capabilities, but in looking for ways to exploit them, to seek out their failures and make them misbehave. This is also perhaps why science-fiction depictions of robots are so much more compelling than the robots we’ve actually made: the stories inevitably begin when something goes awry.

“I acknowledge and understand the threats A.I. has over a lot of people, all of us, but I haven’t dismissed it. I’m using it as an instrument, I hope not a weapon,” Paul said as we were wrapping up our conversation. “I have not let go of that notion that it’s possible to use technology as part of the grammar of freedom. But technology has absolutely changed — maybe the Arab Spring was the last time, no one thinks now it’s part of the grammar of liberation. I can’t make that case. I don’t want to. Because it’s not true.”

The intelligence can be artificial. The service never will be.

A.I. your people will love.

Can’t tell what’s real? We can help.

“It’s sort of amazing to watch the seasons change on these billboards,” the writer Maxe Crandall said as we chugged along one of San Francisco’s choked thoroughfares on a recent Sunday evening, our chaotic progress marked by a gauntlet of text-heavy advertisements trumpeting often-opaque products. “The tech aesthetic and the messaging — and the perceived needs.” Even before we’d left the offramp, descending into the Mission, news of our shiny future gave way to present-day reality in the form of a hand-lettered sign held up to passing cars: PLEASE HELP. HUNGRY.

In the San Francisco Bay Area, where I live, the Sam Altman worldview leaves a footprint at once leaden and negligible. The mutual contempt between Silicon Valley and the arts is striking: myriad arts organizations in the region have long and fruitlessly sought to extract money from an industry they view as parasitic, an industry that has typically viewed them as hopelessly old-fashioned, not even worthy of disruption — until now. I was talking recently with the artist and writer Elisabeth Nicula, whose studio is a few blocks from OpenAI. We were discussing why, as she put it, “the tech Sauron eyeball” had finally landed on art.

“While *some* artists have been experimenting with A.I. all along without much legibility to Gagosian or MoMA, the reason it has been caught up by the art world now is that techlords are pouring billions of dollars into A.I. and the hype surrounding it is attractive to artists and curators and institutions who are always looking to capitalize,” she wrote to me after our conversation. “I don’t think these threads of opportunity or perceived opportunity can really be separated out easily, and I think the overall atmosphere is highly contrived. I heard an excerpt of Martha Stewart’s podcast where Snoop Dogg tells her he’s getting into NFTs because he likes to be on the cutting edge. This was many months, probably a year into the NFT boom and was so laughable, and that’s basically how every institution sounds to me around A.I.”

Elisabeth likens the current vogue for A.I. in the artworld to the recent NFT boom-bust cycle, which she wrote about last year for Momus: “What finally attracted collectors was the coupling of digital art with cryptocurrency, which is made from energy extraction that gathers no raw material for any useful purpose. The artwork underpinning an NFT transaction is beside the point because what’s being collected is the opportunity to speculate on crypto.”

I was struck that, around the same time Paul was starting to think the technology had moved to a place where making would be possible for him again, Elisabeth had stopped making internet art, dismayed by the art world carrying water for yet another sinister industry.

Again and again in writings on A.I., the point is made that when we “communicate with” technologies trained on large language models, we’re really just talking with ourselves. (I have in mind the terribly painful footage of rescued animal orphans receiving comfort and companionship from plush toys — but I’m also thinking of the intense talking to oneself that any involved artistic process entails.) It’s an acceleration of the false promise that social media would connect us, when mostly it’s just atomized us, helping to create a society of isolated individuals, some of whom then turn to chatbots trained on the very data they gave away to these social media companies.

Tim believes that “conversations are where culture is created,” precisely because “we never understand each other perfectly.” We therefore continually surprise each other in our responses, so that “the conversation is going in a direction neither of us is setting.” Culture thus continually morphs, moving along unexpected pathways.

What happens when “this great dynamo of human meaning” loops inward on itself, a plant whose roots repeatedly encircle a too-small pot? O’Gieblyn: “This metadata — the shell of human experience — becomes part of a feedback loop that then actively modifies real behavior.”

I’m pessimistic. But not about artificial intelligence, exactly, which strikes me as a symptom more than an engine. The ennui I feel when using ChatGPT is remarkably like what I feel when reading any number of art reviews, catalogs, wall texts, artist statements, and the like. The mainstream, moneyed artworld, like so many other cultural microcosms, has for a long time functioned as its own large language model, predicated on everyone reading the same correct texts and engaging in the same correct thinking, and then regurgitating more of the same — only we call the data “discourse.” The results look more and more alike, even as the scale proliferates, exhibiting dizzying variations on a theme.

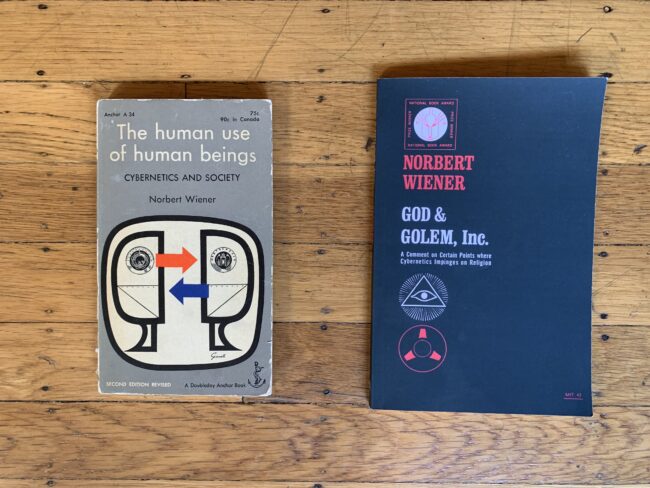

Before Norbert Wiener published God & Golem, Inc. in 1964, he published The Human Use of Human Beings: Cybernetics and Society, in 1950. “As entropy increases, the universe, and all closed systems in the universe, tend naturally to deteriorate and lose their distinctiveness, to move from the least to the most probable state, from a state of organization and differentiation in which distinctions and forms exist, to a state of chaos and sameness.”

There is no good and evil. There is only Balenciaga…

We are watching Harry Potter become Balenciaga, again and again and again.

We are finding out.

Claudia La Rocco’s books include Drive By (Smooth Friend); Certain Things (Afternoon Editions); Quartet (Ugly Duckling Presse); The Best Most Useless Dress (Badlands Unlimited); and petit cadeau, published in live, digital, and print editions by the Chocolate Factory Theater. With musician/composer Phillip Greenlief she is animals & giraffes, an experiment in interdisciplinary improvisation that has released the albums July (Edgetone Records) and Landlocked Beach (Creative Sources). She has been a columnist for Artforum, a cultural critic for WNYC New York Public Radio, and from 2005-2015 was a critic and reporter for The New York Times.

La Rocco has received grants and residencies from such organizations as the Doris Duke Charitable Foundation, The Andy Warhol Foundation, Contemporary Art Stavanger, and Headlands Center for the Arts. She edited I Don’t Poem: An Anthology of Painters (Off the Park Press) and Dancers, Buildings and People in the Streets, the catalogue for Danspace Projectʼs PLATFORM 2015, for which she was guest artist curator. From 2016-2021 she was editorial director of Open Space, and now serves as editor of The Back Room at Small Press Traffic.